What is an AI product - and what isn't one?

An AI product is one where a learned, probabilistic system - rather than hand-coded deterministic logic - is responsible for generating, predicting, classifying, or deciding something that directly shapes the user experience or business outcome. The defining characteristic is not that the product 'uses AI somewhere' but that the product's core value depends on a model making judgments that cannot be fully specified in advance. In the Innovation Mode methodology, this distinction determines which product specification approach to use: traditional products need traditional PRDs; AI products need the extended AI PRD framework with evals, guardrails, and model dependency documentation.

- The litmus test: if you replaced the AI component with a hand-coded rules engine, would the product still deliver its core value? If yes, it's a product using AI. If no, it's an AI product

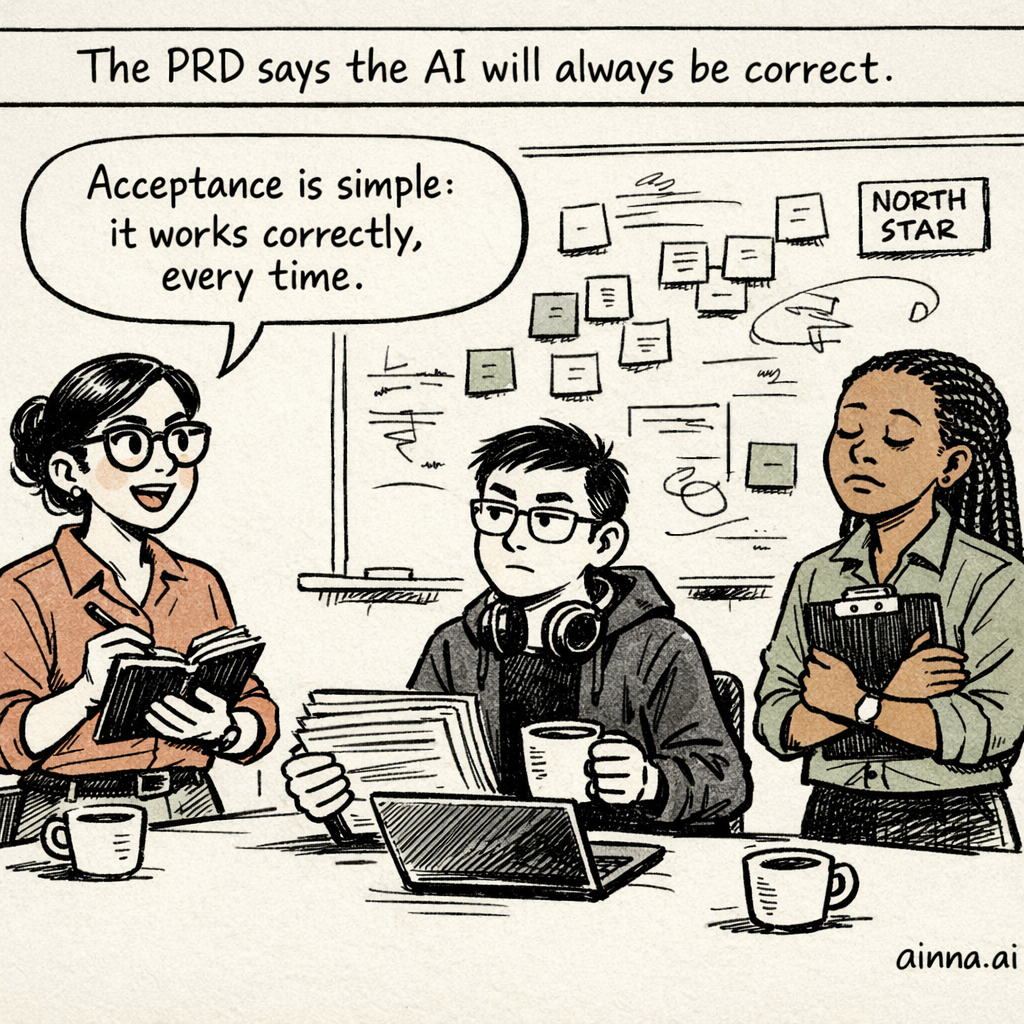

- AI products have probabilistic outputs: the same input can produce different outputs, and 'correct' is a spectrum rather than a binary state

- AI products exhibit emergent behavior: the system may do things - good and bad - that were never explicitly programmed or anticipated by the product team

- AI products have a training-inference lifecycle: their behavior changes not just through code deployments but through model updates, data changes, and fine-tuning

- AI products introduce novel failure modes: hallucinations, bias amplification, adversarial vulnerabilities, and model drift - none of which have a direct counterpart in traditional, deterministic software

- Traditional software usually breaks visibly (error messages, crashes). AI products can fail silently - producing confident-sounding but wrong outputs that users trust
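A toy example shows the probabilistic-output property in action. The Python sketch below assumes a hypothetical "model" reduced to fixed scores (logits) over three canned replies. It uses temperature-scaled softmax sampling, which is one common way generative models turn scores into outputs, and places a hand-coded rule next to it for contrast. `CANDIDATE_REPLIES`, `LOGITS`, and the refund rule are invented for illustration only.

```python
import math
import random

# Hypothetical toy "model": fixed scores (logits) for three candidate replies
# to a single user input. Real models score much larger vocabularies token by token.
CANDIDATE_REPLIES = ["Refund approved", "Refund denied", "Escalate to agent"]
LOGITS = [2.0, 1.0, 1.5]


def softmax(logits, temperature):
    """Convert logits to probabilities; a lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_reply(rng, temperature=1.0):
    """Probabilistic path: pick one reply at random, weighted by its probability."""
    probs = softmax(LOGITS, temperature)
    return rng.choices(CANDIDATE_REPLIES, weights=probs, k=1)[0]


def rules_engine_reply(order_total):
    """Deterministic path: a hand-coded rule maps the same input to the same output."""
    return "Refund approved" if order_total < 100 else "Escalate to agent"


if __name__ == "__main__":
    rng = random.Random(0)  # seeded only so the demo is repeatable
    samples = [sample_reply(rng) for _ in range(1000)]  # same input, 1000 times
    for reply in CANDIDATE_REPLIES:
        print(f"{reply}: {samples.count(reply)}")
    print(rules_engine_reply(42.0), "|", rules_engine_reply(42.0))
```

Running it prints a count for each of the three replies, while the rule returns the same answer twice. Lowering `temperature` concentrates probability on the highest-scoring reply, but at any positive temperature every reply keeps a nonzero probability, so the output stays non-deterministic unless the system always takes the top score.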

Understanding whether you're building an AI product versus a product that uses AI is the single most important framing question for your PRD. It determines which sections you need, how you define quality, how you specify acceptance criteria, and how you plan for the product's evolution. Get this wrong, and you'll write a PRD that fundamentally mismatches your product's nature.
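Acceptance criteria are one place where the distinction becomes concrete. The Python sketch below expresses acceptance as a minimum pass rate over repeated eval runs, instead of a single exact-match check. It assumes a hypothetical `make_stub_model` that replays canned outputs in place of a real model. `EvalCase`, `pass_rate`, `meets_acceptance`, the canned outputs, and the 0.8 threshold are all illustrative assumptions, not the AI PRD framework's own definitions.

```python
import itertools
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    passes: Callable[[str], bool]  # graded check on the output, not exact string equality


def make_stub_model(canned: dict[str, list[str]]) -> Callable[[str], str]:
    """Hypothetical stand-in for a real model: cycles through canned outputs per prompt."""
    cycles = {prompt: itertools.cycle(outputs) for prompt, outputs in canned.items()}
    return lambda prompt: next(cycles[prompt])


def pass_rate(model: Callable[[str], str], cases: list[EvalCase], trials: int = 5) -> float:
    """Run every case several times, since a single probabilistic output is not representative."""
    results = [case.passes(model(case.prompt)) for case in cases for _ in range(trials)]
    return sum(results) / len(results)


def meets_acceptance(model, cases, threshold: float = 0.8, trials: int = 5) -> bool:
    """Acceptance criterion as a minimum pass rate rather than all-or-nothing."""
    return pass_rate(model, cases, trials) >= threshold


SUMMARY_PROMPT = "Summarize: The invoice is due on 5 March."
SENTIMENT_PROMPT = "Classify sentiment: I love this product"

# Illustrative canned outputs; one summary is wrong, as a real model's might be.
CANNED = {
    SUMMARY_PROMPT: ["Due 5 March.", "The invoice is due on 5 March.", "Due in April."],
    SENTIMENT_PROMPT: ["positive", "positive", "Positive"],
}
CASES = [
    EvalCase(SUMMARY_PROMPT, lambda out: "5 March" in out),
    EvalCase(SENTIMENT_PROMPT, lambda out: out.strip().lower() == "positive"),
]

if __name__ == "__main__":
    print(f"pass rate: {pass_rate(make_stub_model(CANNED), CASES):.2f}")
    print("accepted:", meets_acceptance(make_stub_model(CANNED), CASES))
```

An exact-match test on the summary would pass or fail depending on which output it happened to see. The pass-rate criterion instead treats quality as a measured proportion, which matches the idea that "correct" is a spectrum.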