Is AI replacing innovation jobs?

Yes, in specific layers. AI is absorbing the ideation, prototyping, and implementation work that traditionally defined innovation roles. What survives is the synthesis, judgment, and execution layer, and that work consolidates into fewer roles, built around a profile I call the Intrapreneur in Innovation Mode 2.0.

- I have led innovation work for 25 years across enterprise technology, pharmaceuticals, professional services, and venture-stage startups. The pattern is consistent: AI generates ideas in seconds, builds functional prototypes in minutes, and analyzes market opportunities in hours. The roles built around those activities are compressing.

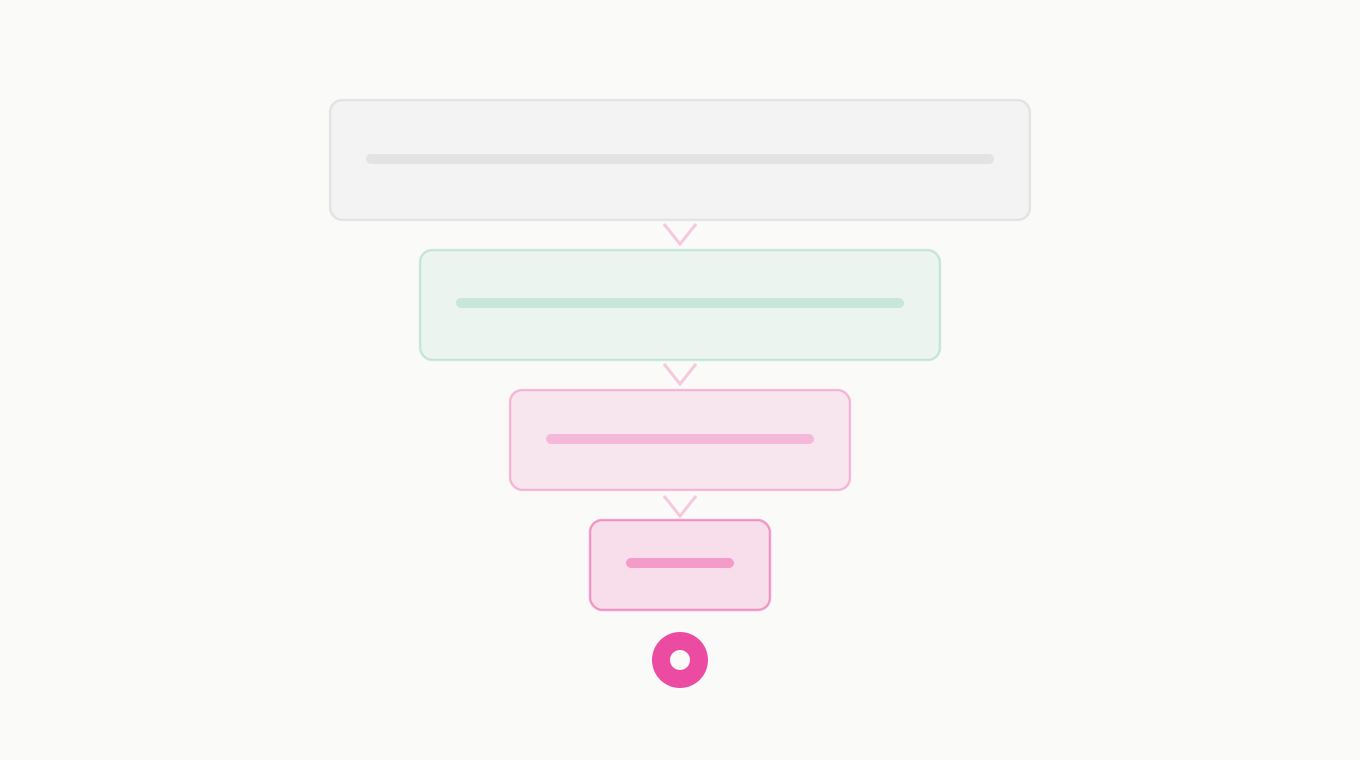

- Three roles compress into the Intrapreneur: the hackathon participant valued for technical execution, the brainstorming facilitator valued for idea generation, and the innovation PM valued for stage-gate management. Those roles still exist in name, but their economic value has migrated to the Intrapreneur.

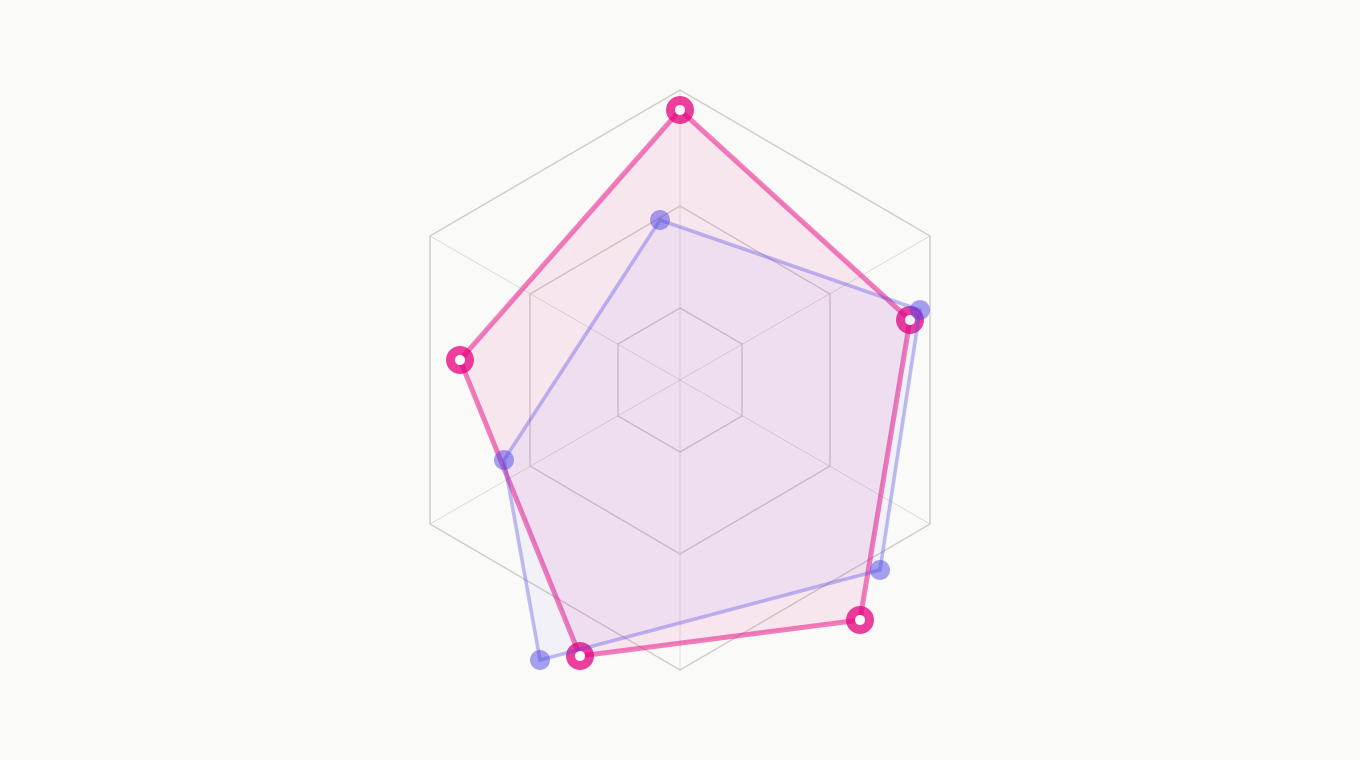

- What the Intrapreneur owns: the judgment to recognize a real opportunity, the conviction to commit resources, the product sense to shape it, and the execution discipline to move it through validation into market. The Nine-Dimension Idea Assessment Model and structured validation form the operational toolkit.

- Headcount implication: smaller innovation teams, higher expectations per person, and effort reallocated toward synthesis and execution. Plan for this rather than around it; organizations that pretend otherwise will be caught flat-footed.

- For the long-form argument, see *The Innovator's Identity Crisis* on theinnovationmode.com.

Innovation work is not disappearing. A specific shape of it is. Hire and develop for the Intrapreneur.